Since its introduction in 2004, PCIe® has become the most popular interconnect for low-latency chip-to-chip connections. From its humble beginnings in fan-out interconnects, PCIe has been integrated into AI and cloud servers, JBOF storage systems, automotive ADAS, industrial automation, PCs, and other platforms.

Scale-up AI servers, which can contain hundreds of processors spread over multiple racks, represent the next logical step for PCIe. Although far larger than today’s single-chassis AI servers, scale-up servers demand the same thing from interconnect fabrics: coherent, low-latency links that enable fast, secure communication between components. PCIe’s status as a widely used standard that evolves to meet customer demands further puts it at the forefront for scale-up.

Let’s explore the PCIe scale-up usage model and how these architectures will evolve.

PCIe Scale-up Usage Model

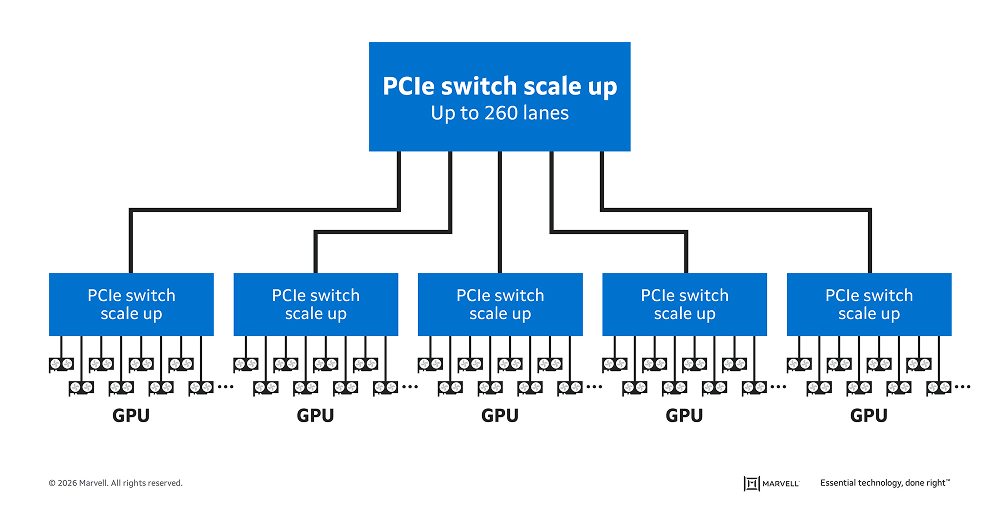

In the figure above, a PCIe switch connects to multiple PCIe switches, each of which links to multiple GPUs, enabling peer-to-peer (P2P) communication with minimal latency and maximum performance.
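As a rough illustration of the two-tier arrangement described above, the sketch below models a hypothetical fabric in which one top-level switch fans out to several leaf switches, then counts how many switches a P2P transfer crosses. The function names, the leaf count and the GPUs-per-leaf value are assumptions made for illustration; they are not taken from the figure or from any specific product.

```python
def build_fabric(num_leaves: int, gpus_per_leaf: int) -> dict[str, str]:
    """Map each GPU name to the leaf switch it attaches to (hypothetical layout)."""
    return {
        f"gpu{leaf * gpus_per_leaf + i}": f"leaf{leaf}"
        for leaf in range(num_leaves)
        for i in range(gpus_per_leaf)
    }


def switches_traversed(fabric: dict[str, str], src: str, dst: str) -> int:
    """Count the switches on the path between two GPUs in a two-tier tree."""
    if src == dst:
        return 0
    if fabric[src] == fabric[dst]:
        return 1  # both GPUs hang off the same leaf switch
    return 3  # source leaf -> top-level switch -> destination leaf


# Assumed example sizes: 4 leaf switches with 8 GPUs each.
fabric = build_fabric(num_leaves=4, gpus_per_leaf=8)
print(len(fabric))                                   # total GPUs in the fabric
print(switches_traversed(fabric, "gpu0", "gpu7"))    # same leaf
print(switches_traversed(fabric, "gpu0", "gpu8"))    # different leaves
```

Every switch on the path adds latency, which is why the number of tiers, and therefore the radix of each switch, matters for scale-up designs.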

To support these environments, PCIe switches must provide, among other things, low latency, low power, large port counts and scalability.

The challenge is delivering on all of these requirements at once. Two of the most important requirements above, low latency and lower power, can be achieved by adopting a monolithic design for switch silicon. Communication between the dies in a chiplet-based package invariably increases latency and power, and the penalty is compounded by the intense cross traffic between XPUs that is a signature of AI workloads. Large port counts and better scalability, by contrast, can arguably be achieved more easily with a chiplet approach because it gives designers more beachfront, the die-edge area available for I/O.

The Marvell® Structera™ S family of PCIe switches meets all of these requirements without compromise through its unique design and feature set. A monolithic device, Structera S eliminates the overhead of the chiplet approach to reduce power, latency and board space.

At the same time, it provides a foundation for best-in-class scalability. Structera S 50256 for PCIe 5.0 (formerly the XConn Technologies XC51256) features a market-leading 256 lanes operating at up to 32 gigatransfers per second per lane for 2,048GB/sec of aggregate bidirectional bandwidth.

Structera S 60260 for PCIe 6.0 extends this lead with a 260-lane design, giving it the highest radix in the industry for a PCIe switch and nearly 2x the lane density of competing products, along with over 4TB/sec of aggregate bidirectional bandwidth. This enables a single Structera S PCIe switch to connect to 16 XPUs over x16 PCIe 6.0 links or 32 XPUs over x8 PCIe 6.0 links or, as shown in the diagram, to additional PCIe switches that scale a system to hundreds of XPUs and beyond while minimizing latency and power.
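The bandwidth and port figures quoted for the two switches can be checked with some quick arithmetic. The sketch below assumes that each GT/s on a lane carries roughly 1 Gb/s (1/8 GB/s) in each direction and ignores encoding and protocol overhead, so the results are approximations; the function names are illustrative only. The 64 GT/s rate used for PCIe 6.0 is the published per-lane rate for that generation.

```python
def aggregate_bidir_gb_per_s(lanes: int, gt_per_s: float) -> float:
    """Approximate aggregate bidirectional bandwidth in GB/s.

    Assumption: 1 GT/s per lane ~ 1/8 GB/s per direction, overhead ignored.
    """
    per_lane_one_way = gt_per_s / 8
    return lanes * per_lane_one_way * 2  # x2 for both directions


def max_ports(lanes: int, link_width: int) -> int:
    """Number of full-width links that fit in the available lanes."""
    return lanes // link_width


# 256 lanes at 32 GT/s (PCIe 5.0 device described above)
print(aggregate_bidir_gb_per_s(256, 32))   # 2048.0 GB/s

# 260 lanes at 64 GT/s (PCIe 6.0 device described above)
print(aggregate_bidir_gb_per_s(260, 64))   # 4160.0 GB/s, i.e. over 4 TB/s

# Carving 260 lanes into x16 or x8 links
print(max_ports(260, 16), max_ports(260, 8))   # 16 32
```

Sixteen x16 links or thirty-two x8 links each consume 256 lanes, which leaves four of the 260 lanes free in either configuration.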

The new switch samples to select customers in the third quarter. Marvell is now showing Structera S 60260 in a live demo at OFC 2026.

A Rapid Generational Roadmap

Marvell has been at the forefront of PCIe development. Last year, Marvell demonstrated PCIe 7.0 operating at 128 gigatransfers per second (GT/s) per lane, which equates to 512 GB/s of bidirectional bandwidth on a x16 link, over both optical and copper links.

In February, Marvell showed a PCIe 8.0 SerDes operating at 256 GT/s at DesignCon. Slated to be finalized by 2028, the PCIe 8.0 specification is expected to double the bandwidth of the PCIe 7.0 specification, for up to 1 TB/s of bidirectional bandwidth in a x16 configuration. In addition to the PCIe switch portfolio and rich in-house PCIe SerDes technology, Marvell offers PCIe/CXL retimers to give cable partners solutions for cross-rack AEC and AOC products.
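The generational doubling is easy to tabulate. The sketch below starts from the 32 GT/s PCIe 5.0 rate and doubles it for each generation through PCIe 8.0, then converts the per-lane rate to bidirectional bandwidth. It assumes a x16 link and the same rough conversion of 1 GT/s to 1/8 GB/s per direction, with overhead ignored; the helper names are hypothetical, and the doubling rule is applied only to generations 5 through 8.

```python
BASE_GEN = 5          # PCIe 5.0
BASE_RATE_GT_S = 32   # 32 GT/s per lane for PCIe 5.0


def lane_rate_gt_per_s(gen: int) -> int:
    """Per-lane signaling rate, doubling each generation from PCIe 5.0 to 8.0."""
    if not 5 <= gen <= 8:
        raise ValueError("this sketch only covers PCIe 5.0 through 8.0")
    return BASE_RATE_GT_S * 2 ** (gen - BASE_GEN)


def x16_bidir_gb_per_s(gen: int) -> float:
    """Approximate bidirectional bandwidth of a x16 link (overhead ignored)."""
    return lane_rate_gt_per_s(gen) / 8 * 16 * 2


for gen in range(5, 9):
    print(f"PCIe {gen}.0: {lane_rate_gt_per_s(gen)} GT/s per lane, "
          f"~{x16_bidir_gb_per_s(gen):.0f} GB/s bidirectional at x16")
```

Under these assumptions, PCIe 7.0 works out to about 512 GB/s and PCIe 8.0 to about 1,024 GB/s, or roughly 1 TB/s, on a x16 link.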

PCIe, ESUN, UALink, CXL and the Memory Wall

Along with PCIe-based switches, Marvell is actively developing solutions based on ESUN and UALink to give customers the broadest set of solutions for optimizing their infrastructure. How these different standards converge and complement each other across infrastructures will shape the evolution of high-performance networking over the next decade.

PCIe switching will also play a role in overcoming AI’s memory wall by providing a foundation for CXL memory pooling. Marvell Structera S CXL switches are package-, pin-, and power-compatible with Structera S PCIe switches. This allows customers to design a single hardware platform for both applications while reducing development costs and design cycles.

See more here about the new CXL switch and Marvell CXL benchmarks for AI.

Conclusion

Scale-up represents the newest and fastest-growing segment in networking. At the same time, many of the underlying pillars and principles of scale-up networking are quite familiar. PCIe will become one of the primary vehicles for ensuring the market can scale rapidly, safely and securely.

# # #

This blog contains forward-looking statements within the meaning of the federal securities laws that involve risks and uncertainties. Forward-looking statements include, without limitation, any statement that may predict, forecast, indicate or imply future events or achievements. Actual events or results may differ materially from those contemplated in this blog. Forward-looking statements are only predictions and are subject to risks, uncertainties and assumptions that are difficult to predict, including those described in the “Risk Factors” section of our Annual Reports on Form 10-K, Quarterly Reports on Form 10-Q and other documents filed by us from time to time with the SEC. Forward-looking statements speak only as of the date they are made. Readers are cautioned not to put undue reliance on forward-looking statements, and no person assumes any obligation to update or revise any such forward-looking statements, whether as a result of new information, future events or otherwise.


Copyright © 2026 Marvell, All rights reserved.