At the Heterogeneous Composable and Disaggregated Systems (HCDS) Workshop, co-located with Architectural Support for Programming Languages and Operating Systems (ASPLOS), Senior Staff Engineer Jing Ding won Best Paper for her research on the Marvell® Photonic Fabric™ Technology Platform.

Characterizing KV cache retrieval efficiency reveals a critical mismatch between the capacity and bandwidth available across memory tiers and the demands of large-scale LLM inference. Across LLaMA3-8B to 405B on NVIDIA A100/H200 systems, retrieving the KV cache from host memory is up to 100x faster than re-computing it on the GPU for contexts of up to 4M tokens, yet host DRAM capacity cannot accommodate the KV demands of long-context, multi-tenant and multi-turn workloads.

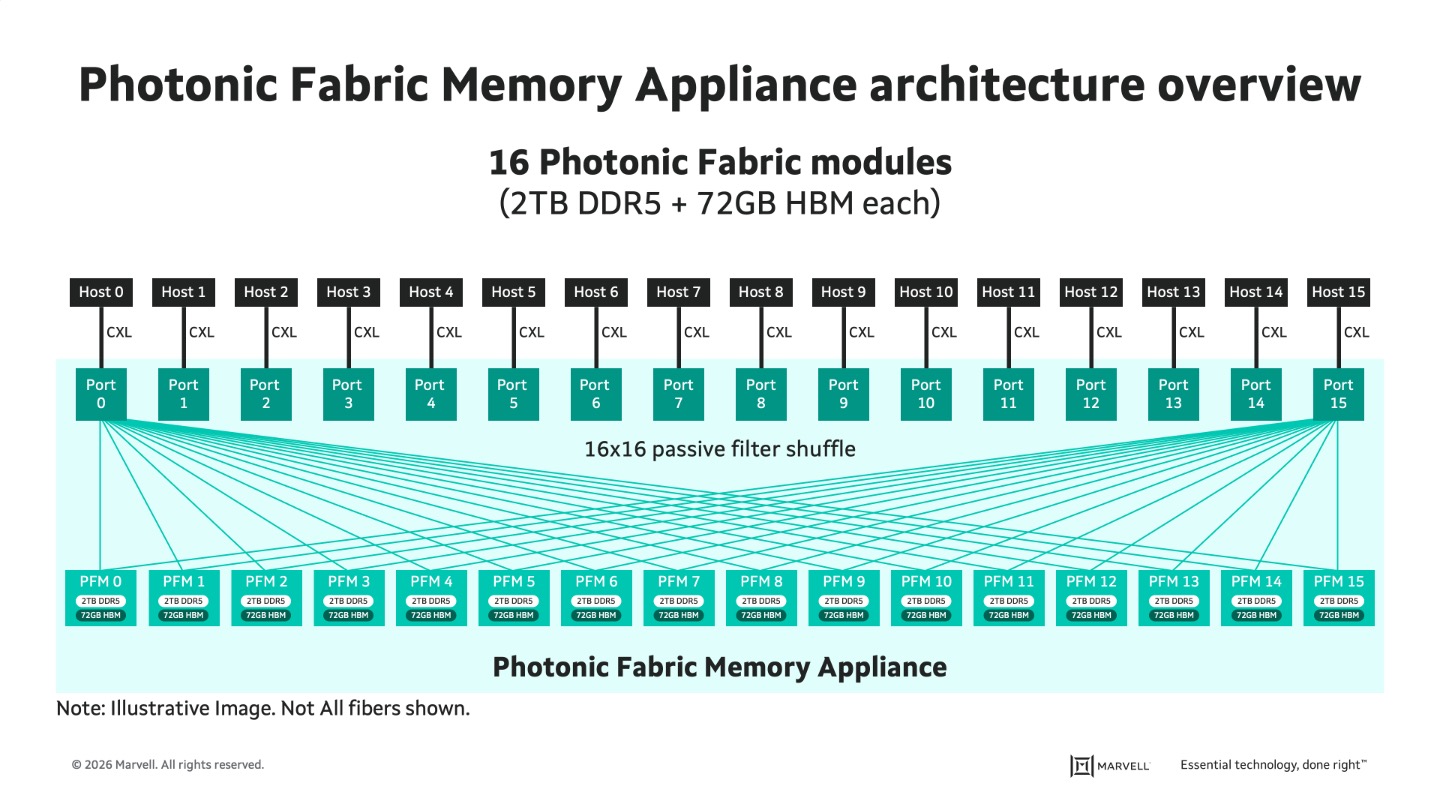
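To make the capacity side of this mismatch concrete, here is a rough back-of-the-envelope sketch of KV cache size as a function of context length. It is not taken from the paper: the layer counts, KV head counts and head dimension are assumptions based on the publicly described LLaMA 3 configurations, 16-bit KV values are assumed, and the names `ModelConfig`, `kv_bytes_per_token` and `kv_cache_bytes` are hypothetical helpers introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Attention shape parameters that determine KV cache size (assumed values)."""
    name: str
    num_layers: int
    num_kv_heads: int
    head_dim: int


def kv_bytes_per_token(cfg: ModelConfig, bytes_per_value: int = 2) -> int:
    """Bytes of KV cache one token occupies: a key and a value vector
    per KV head, per layer. bytes_per_value=2 assumes FP16/BF16 storage."""
    return 2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * bytes_per_value


def kv_cache_bytes(cfg: ModelConfig, context_tokens: int, bytes_per_value: int = 2) -> int:
    """Total KV cache bytes for a single sequence of the given length."""
    return kv_bytes_per_token(cfg, bytes_per_value) * context_tokens


# Assumed configurations (publicly described LLaMA 3 shapes, not from the paper).
LLAMA3_8B = ModelConfig("LLaMA3-8B", num_layers=32, num_kv_heads=8, head_dim=128)
LLAMA3_405B = ModelConfig("LLaMA3-405B", num_layers=126, num_kv_heads=8, head_dim=128)

if __name__ == "__main__":
    for cfg in (LLAMA3_8B, LLAMA3_405B):
        for tokens in (100_000, 1_000_000, 4_000_000):
            gb = kv_cache_bytes(cfg, tokens) / 1e9
            print(f"{cfg.name}: {tokens:>9,} tokens -> {gb:,.1f} GB of KV cache")
```

Under these assumptions, a single 1M-token sequence for LLaMA3-405B needs roughly 516 GB of KV cache before any multi-tenant or multi-turn reuse is considered, which illustrates why host DRAM alone runs out quickly.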

While CXL-enabled memory pooling could address this capacity gap, it faces fundamental electrical interconnect limitations: rack-scale distance constraints, switch contention under multi-host workloads, and power-thermal scaling challenges. By leveraging the Photonic Fabric™ optical interconnect technology platform to break reach limitations, with CXL as the host communication protocol, Marvell enables a unique pod-scale memory sharing appliance that lets up to 16 servers across multiple racks dynamically share up to 32 TB of memory capacity.

Ding and her co-author, Senior Director of Photonic Fabric Software Trung Diep, show in their paper, “Bridging the Memory Hierarchy Gap in LLM Inference with Photonic Fabric™ Architecture,” that the photonic interconnects’ distance-independent power and thermal decoupling enable true rack-scale disaggregation. The architecture supports 16x higher concurrent user density, with a 100x time-to-first-token improvement at 100K contexts and 370x at 1M contexts for LLaMA3-405B.

As AI models scale, memory, not compute, has become the primary bottleneck to performance and efficiency. This work addresses one of the most fundamental challenges in modern AI systems: scaling memory capacity and bandwidth to keep pace with increasingly large models and workloads. By enabling faster access to larger, shared memory pools, it unlocks higher system utilization, lower latency, and improved performance, making it possible to support longer context windows, higher user concurrency, and more efficient AI infrastructure without unsustainable increases in power and cost.

Jing Ding is an accomplished AI infrastructure and machine learning engineer and research scientist with a Ph.D. from North Carolina State University. She worked at Celestial AI, which was recently acquired by Marvell, where she focused on photonic interconnect architectures for AI memory disaggregation and hardware-software co-design for LLM training and inference. Now, at Marvell, she continues to advance photonic fabric technology for next-generation AI infrastructure as part of the Data Center Group.

The HCDS workshop aims to evaluate novel research on composable disaggregated systems and their integration with operating systems and software runtimes, maximizing workload deployment efficiency and enhancing user experience. Heterogeneous composable and disaggregated systems provide a system design approach that reduces imbalances between workload resource requirements and the availability of computing system resources. They also provide an opportunity for ingenuity in process communication and data exchange.

# # #

This blog contains forward-looking statements within the meaning of the federal securities laws that involve risks and uncertainties. Forward-looking statements include, without limitation, any statement that may predict, forecast, indicate or imply future events or achievements. Actual events or results may differ materially from those contemplated in this blog. Forward-looking statements are only predictions and are subject to risks, uncertainties and assumptions that are difficult to predict, including those described in the “Risk Factors” section of our Annual Reports on Form 10-K, Quarterly Reports on Form 10-Q and other documents filed by us from time to time with the SEC. Forward-looking statements speak only as of the date they are made. Readers are cautioned not to put undue reliance on forward-looking statements, and no person assumes any obligation to update or revise any such forward-looking statements, whether as a result of new information, future events or otherwise.


Copyright © 2026 Marvell, All rights reserved.