Shared storage performance has a significant impact on overall system performance, which is why system administrators try to understand it and plan accordingly. A shared storage subsystem has three components: the storage system software (host), the storage network (switches and HBAs), and the storage array.

Storage performance can be measured at each of these three levels and aggregated into a figure for the whole subsystem, but this can get quite complicated. Fortunately, storage performance can be represented effectively using two simple metrics: Input/Output Operations per Second (IOPS) and latency. Knowing these two values for a target workload, a user can optimize the performance of a storage system.

Let’s look at what these two metrics are and how to use them to optimize storage performance.

What is IOPS?

IOPS is a standard unit of measurement for the maximum number of reads and writes a storage device can complete per second. IOPS represents the number of transactions that can be performed, not the bytes of data transferred. To calculate throughput, multiply the IOPS figure by the block size used in the I/O. IOPS is a neutral measure of performance and can be used in a benchmark where two systems are compared using the same block sizes and read/write mix.
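The throughput relationship described above (IOPS multiplied by block size) can be sketched in a few lines of Python. The function name and the sample figures of 100,000 IOPS at 4 KiB and 64 KiB blocks are illustrative assumptions, not measurements from any particular system.

```python
def throughput_mb_per_s(iops: float, block_size_bytes: int) -> float:
    """Return throughput in MB/s (1 MB = 10**6 bytes) for a given IOPS and block size."""
    return iops * block_size_bytes / 1_000_000


if __name__ == "__main__":
    # Hypothetical figures: the same IOPS number at two different block sizes.
    print(throughput_mb_per_s(100_000, 4096))   # 4 KiB blocks
    print(throughput_mb_per_s(100_000, 65536))  # 64 KiB blocks
```

The same IOPS number yields very different throughput at different block sizes, which is why a fair comparison holds the block size and read/write mix constant.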

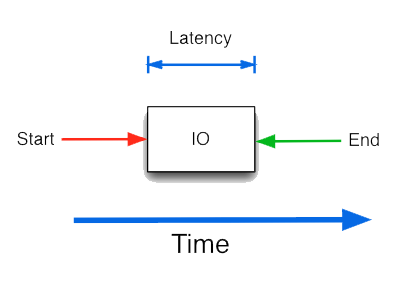

What is Latency?

Latency is the total time it takes to complete a requested operation, including the time for the requestor to receive the response. Latency includes the time spent in all subsystems and is a good indicator of congestion in the system.
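As a rough illustration of this definition, the sketch below times an operation from request to response as seen by the requestor, then summarizes the samples. The helper names `measure_latencies` and `summarize` are hypothetical, and `operation` stands in for whatever I/O call is being measured; this is a minimal sketch, not a benchmarking tool.

```python
import statistics
import time


def measure_latencies(operation, count):
    """Time `count` calls of `operation`; return per-call latencies in seconds."""
    latencies = []
    for _ in range(count):
        start = time.perf_counter()
        operation()  # hypothetical stand-in for one I/O request and its response
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Return the mean, 99th-percentile (nearest-rank) and maximum latency."""
    ordered = sorted(latencies)
    n = len(ordered)
    # Nearest-rank percentile: ceil(0.99 * n) - 1, computed with integers.
    p99_index = max(0, (99 * n + 99) // 100 - 1)
    return {
        "mean": statistics.mean(ordered),
        "p99": ordered[p99_index],
        "max": ordered[-1],
    }


if __name__ == "__main__":
    samples = measure_latencies(lambda: sum(range(1000)), 100)
    print(summarize(samples))
```

Because the timer wraps the whole call, the result includes time spent in every layer the request passes through, so a rising tail (p99 or max) relative to the mean is one sign of congestion somewhere along the path.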

Find more about Marvell’s QLogic Fibre Channel adapter technology at:

https://www.marvell.com/fibre-channel-adapters-and-controllers/qlogic-fibre-channel-adapters/

Copyright © 2026 Marvell, All rights reserved.