By Robert Friend, Principal Engineer, Enterprise Network Marketing, Marvell

Multivendor environments have been the norm for decades to accelerate innovation, lower costs, and enable customers to optimize infrastructure with best-of-breed technologies.

But are there still hidden advantages to going with a vertically integrated vendor?

Marvell, Dell and Cisco set out to answer this question in storage networking by submitting two SAN solutions to Tolly, a technical benchmarking service: the Dell PowerStore 32G Enterprise Storage Solution [1] and the Dell PowerMax 64G Enterprise Storage Solution [2]. The solutions combine Marvell® QLogic® 32G and 64G Fibre Channel HBAs with Dell PowerEdge 17G servers, Connectrix MDS Switches and Directors, as well as PowerStore and PowerMax storage.

Tolly found that the systems delivered seamless interoperability. More importantly, the technologies from the individual vendors complemented one another in areas such as SAN congestion mitigation and comprehensive security.

Better together, in other words, is better. The set-up and the test results are below.

FPINs and Virtual Lanes Offer Interoperability and Compatibility

The solutions offer comprehensive 32/64G Fibre Channel (FC) interoperability, making them easy to adopt for storage networking applications in the data center.

The features are also plug-and-play compatible for a seamless experience. Marvell support for Fabric Performance Impact Notification frames (FPINs) and Virtual Lanes (VLs) on SAN endpoint connections, for example, pairs well with Connectrix MDS’ Dynamic Ingress Rate Limiting (DIRL) inside the FC storage area network (SAN). Marvell HBAs uniquely offer these VLs, which are compatible with all FC switches, including those from solution partner Cisco.

Here at Marvell, we talk frequently to our customers and end users about I/O technology and connectivity. This includes presentations on I/O connectivity at various industry events and delivering training to our OEMs and their channel partners. Often, when discussing the latest innovations in Fibre Channel, audience questions will center around how relevant Fibre Channel (FC) technology is in today’s enterprise data center. This is understandable as there are many in the industry who have been proclaiming the demise of Fibre Channel for several years. However, these claims are often very misguided due to a lack of understanding about the key attributes of FC technology that continue to make it the gold standard for use in mission-critical application environments.

From inception several decades ago, and still today, FC technology is designed to do one thing, and one thing only: provide secure, high-performance, and high-reliability server-to-storage connectivity. While the Fibre Channel industry is made up of a select few vendors, the industry has continued to invest and innovate around how FC products are designed and deployed. This isn’t just limited to doubling bandwidth every couple of years but also includes innovations that improve reliability, manageability, and security.

By Todd Owens, Field Marketing Director, Marvell

While Fibre Channel (FC) has been around for a couple of decades now, the Fibre Channel industry continues to develop the technology in ways that keep it at the forefront of the data center for shared storage connectivity. Fibre Channel has always been a reliable technology, and continued innovations in performance, security and manageability have made Fibre Channel I/O the go-to connectivity option for business-critical applications that leverage the most advanced shared storage arrays.

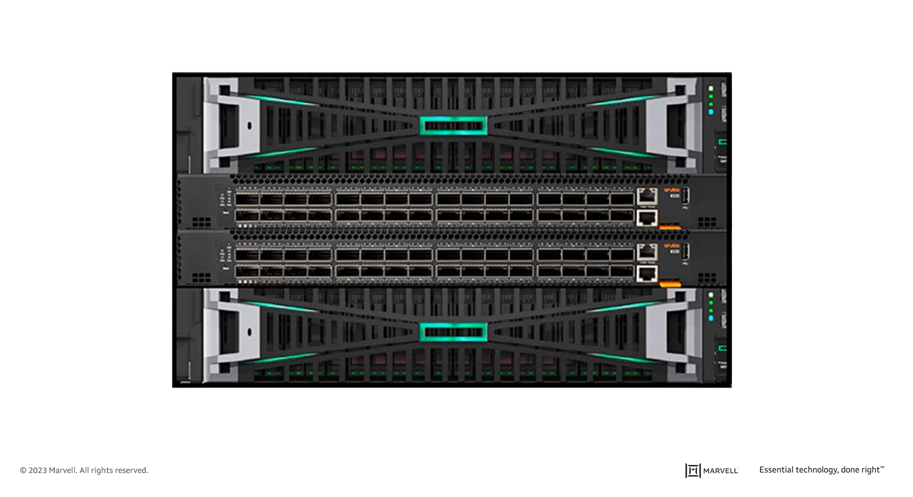

A development that highlights the progress and significance of Fibre Channel is Hewlett Packard Enterprise’s (HPE) recent announcement of their latest offering in their Storage as a Service (SaaS) lineup with 32Gb Fibre Channel connectivity. HPE GreenLake for Block Storage MP powered by HPE Alletra Storage MP hardware features a next-generation platform connected to the storage area network (SAN) using either traditional SCSI-based FC or NVMe over FC connectivity. This innovative solution not only provides customers with highly scalable capabilities but also delivers cloud-like management, allowing HPE customers to consume block storage any way they desire – own and manage, outsource management, or consume on demand.

HPE GreenLake for Block Storage powered by Alletra Storage MP

At launch, HPE is providing FC connectivity for this storage system to the host servers and supporting both FC-SCSI and native FC-NVMe. HPE plans to provide additional connectivity options in the future, but the fact that they prioritized FC connectivity speaks volumes about the customer demand for mature, reliable, and low-latency FC technology.

By Nishant Lodha, Director of Product Marketing – Emerging Technologies, Marvell

While age is just a number and so is new speed for Fibre Channel (FC), the number itself is often irrelevant and it’s the maturity that matters – kind of like a bottle of wine! Today, as we make a toast to the data center and pop open (announce) the Marvell® QLogic® 2870 Series 64G Fibre Channel HBAs, take a glass and sip into its maturity to find notes of trust and reliability alongside operational simplicity, in-depth visibility, and consistent performance.

Big words on the label? I will let you be the sommelier as you work through your glass and my writings.

By Todd Owens, Field Marketing Director, Marvell and Jacqueline Nguyen, Marvell Field Marketing Manager

Storage area network (SAN) administrators know they play a pivotal role in ensuring mission-critical workloads stay up and running. The workloads and applications that run on the infrastructure they manage are key to overall business success for the company.

Like any infrastructure, issues do arise from time to time, and the ability to identify transient links or address SAN congestion quickly and efficiently is paramount. Today, SAN administrators typically rely on proprietary tools and software from the Fibre Channel (FC) switch vendors to monitor the SAN traffic. When SAN performance issues arise, they rely on their years of experience to troubleshoot the issues.

What creates congestion in a SAN anyway?

Refresh cycles for servers and storage are typically shorter and more frequent than those for SAN infrastructure. This results in servers and storage arrays that run at different speeds being connected to the same SAN. Legacy servers and storage arrays may connect to the SAN at 16GFC bandwidth while newer servers and storage are connected at 32GFC.

Fibre Channel SANs use buffer credits to manage traffic flow in the SAN: a sender can transmit a frame only when it holds a credit, and the receiver returns that credit once it has freed the buffer. When a slower device intermixes with faster devices on the SAN, there can be situations where the slower device takes longer to return buffer credits, causing what is called “Slow Drain” congestion. This is a well-known issue in FC SANs that can be time-consuming to troubleshoot and, with newer FC-NVMe arrays, this problem can be magnified. But those days are soon coming to an end with the introduction of what we can refer to as the self-driving SAN.
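To make the slow-drain effect concrete, here is a minimal Python sketch of credit-based flow control on a single link. It is a toy model, not how any HBA or switch is implemented: the function name `simulate_link`, the one-frame-per-tick timing, and the credit count of 8 are all assumptions chosen for illustration.

```python
def simulate_link(ticks, credits, drain_every):
    """Toy model of one sender -> receiver link using buffer credits.

    ticks:       number of time steps to simulate
    credits:     receive buffers advertised by the receiver (illustrative value)
    drain_every: receiver frees one buffer every N ticks (1 = keeps pace with sender)
    Returns (frames_sent, ticks_stalled).
    """
    available = credits  # credits the sender currently holds
    buffered = 0         # frames occupying the receiver's buffers
    sent = 0
    stalled = 0
    for tick in range(1, ticks + 1):
        # On its drain ticks the receiver frees one buffer and returns a credit.
        if buffered > 0 and tick % drain_every == 0:
            buffered -= 1
            available += 1
        # The sender may transmit one frame per tick, but only if it holds a credit.
        if available > 0:
            available -= 1
            buffered += 1
            sent += 1
        else:
            stalled += 1
    return sent, stalled


if __name__ == "__main__":
    for label, drain_every in (("receiver keeps pace", 1), ("slow-draining receiver", 4)):
        sent, stalled = simulate_link(ticks=100, credits=8, drain_every=drain_every)
        print(f"{label}: sent={sent} frames, sender stalled for {stalled} ticks")
```

In this model the sender never stalls while the receiver returns credits as fast as frames arrive. Once the receiver drains more slowly, the sender burns through its initial credits and then spends most of its time waiting. On a real fabric, that waiting backs up into shared switch links and affects unrelated traffic, which is why slow drain is hard to pin on a single device.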